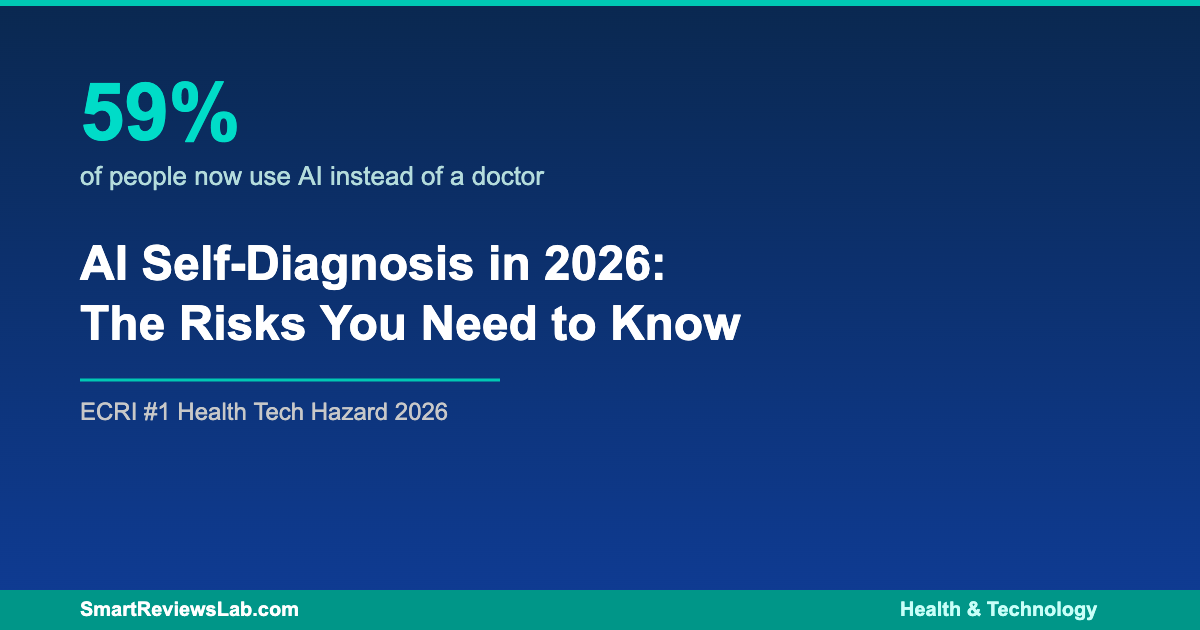

If you’ve ever typed your symptoms into ChatGPT or asked an AI chatbot whether that persistent headache could be something serious, you’re far from alone. A January 2026 survey found that 59% of adults now use AI tools to self-diagnose health conditions before, or instead of, visiting a doctor. Meanwhile, the ECRI Institute has named the misuse of AI chatbots for medical advice the number one health technology hazard of 2026.

The convenience is undeniable, but the risks are real. Here’s what you need to know about AI self-diagnosis, why experts are raising alarms, and how to use these tools safely.

The Rise of AI as Your First Doctor

The numbers tell a dramatic story. In the UK, nearly three in five adults regularly consult AI tools about physical or mental health symptoms, with searches for “what is my illness?” increasing by 85% since January 2025. In the United States, roughly one in three Americans has turned to an AI chatbot for health information in the past year, according to a KFF Tracking Poll from early 2026.

Young adults are leading the charge — 85% of 18-to-24-year-olds report regularly using AI for health queries. But this isn’t just a young person’s trend: more than 35% of people aged 65 and over are also turning to AI chatbots for medical guidance. The driving forces include long wait times for doctor appointments, rising healthcare costs, and the always-available nature of AI tools.

Why Experts Are Sounding the Alarm

The ECRI Institute, an independent patient safety organization, placed AI chatbot misuse at the top of its annual health technology hazards list for 2026. Its researchers found specific examples of chatbots suggesting incorrect diagnoses, recommending unnecessary tests, promoting substandard medical supplies, and even inventing nonexistent anatomy when asked medical questions.

A study from the University of Oxford published in February 2026 further warned that large language models present significant risks when used for medical advice, largely because they generate text by predicting word patterns rather than truly understanding medical context. The result is that AI chatbots can deliver false or incomplete health information with an authoritative tone that makes the advice seem trustworthy.

Perhaps most concerning is the follow-up gap. Research shows that 42% of people who use AI for physical health questions never consult a doctor afterward, and 58% of those seeking mental health guidance from AI never speak to a professional. These individuals receive their only health input from a tool that cannot perform examinations, order tests, or be held accountable for misdiagnosis.

Where AI Health Tools Actually Help

Despite the warnings, AI health tools aren’t entirely without merit. More than one in ten users (11%) reported that AI helped their health situation “a great deal,” with over 40% saying it helped “somewhat.” AI chatbots can be useful for understanding general health concepts and medical terminology, getting a preliminary sense of whether symptoms warrant urgent care, preparing better questions for doctor visits, and accessing health information outside of office hours.

The key distinction experts make is between using AI as a starting point versus treating it as a final answer. When used to supplement — not replace — professional medical care, AI health tools can play a constructive role in personal health management.

How to Use AI for Health Questions Safely

If you’re going to use AI chatbots for health-related queries, experts from Yale, Duke, and Oxford recommend following several important guidelines. First, never use AI as your sole source of medical advice. Treat chatbot responses as preliminary information, not a diagnosis. Always verify important health information with a licensed healthcare provider.

Second, be specific but skeptical. Provide clear descriptions of your symptoms, but question any confident-sounding diagnosis. AI chatbots don’t have access to your medical history, can’t perform physical exams, and may generate plausible-sounding but inaccurate information.

Third, watch for emergency red flags. If you experience symptoms like chest pain, difficulty breathing, sudden severe headaches, or signs of stroke, skip the chatbot entirely and seek immediate medical attention. AI tools are not designed for emergency triage.

Finally, check the sources. If an AI chatbot cites studies or medical guidelines, verify those sources independently. Chatbots can hallucinate citations that don’t exist or misrepresent real research findings.

The Bottom Line

AI self-diagnosis tools are here to stay, and their adoption will likely keep growing in 2026 and beyond. The technology offers genuine convenience, especially for those facing barriers to traditional healthcare access. But the gap between what AI chatbots can do and what patients expect them to do remains dangerously wide.

The smartest approach in 2026 is to treat AI health tools the way you’d treat advice from a well-read friend — potentially useful as a starting point, but never a substitute for professional medical expertise. Your health is too important to leave entirely in the hands of an algorithm that can’t tell the difference between a common cold and something far more serious.